Tuesday, 10 December 2019

Program a Delphi Collapsible Outliner - Deleting Nodes

If you've been following my YouTube series on using Delphi to create a Collapsible Outliner, you'll be interested in the latest lesson which explains how to delete nodes (and child nodes).

The source code for all the projects in this series is available from the Bitwise Books web site. Just sign up for our newsletter and we'll send you the download links: http://www.bitwisebooks.com

Friday, 29 November 2019

Learn To Program A Text Adventure Game

Looking for the perfect Christmas present for the programmer who “has everything”? May I suggest my newest book, The Little Book Of Adventure Game Programming.

The Little Book Of Adventure Game Programming provides a step-by-step guide to creating a game in C#, which is one of the most important languages on Windows and is also available on macOS and Linux. The programming principles and techniques explained in the book can also be used to write adventure games in other languages such as Java, Ruby or Object Pascal. Short examples (source code also available for download) are provided in those languages. As well as teaching adventure game programming specifically, this book can also be used as a tutorial on writing C# programs. It covers all the most important features of the C# language.

This book explains...


- How to write Interactive Fiction (IF) games

- Creating class hierarchies

- How to create a map of linked 'rooms'

- Moving the player around the map

- Adding treasures to rooms

- How to take and drop treasures

- Putting objects into containers (sacks, treasure chests etc.)

- Using lists and dictionaries

- Overridden methods

- Overloaded methods

- How to save and load games

- Designing a game with a user interface

- Designing a command-line game to run at the system prompt

- ...and much more

Available in paperback or for Kindle from Amazon (US): https://www.amazon.com/dp/1913132080 and (UK): https://www.amazon.co.uk/dp/1913132080

Tuesday, 26 November 2019

Customize the Colours of the Visual Studio Code Terminal

I love Visual Studio Code, Microsoft's free multi-language programming editor, but I've never found the perfect colour scheme. I'm a traditionalist and like the Terminal to have a black background with green text. In fact, you can change the Terminal to use any colours you like, as I show in this video.

Tuesday, 12 November 2019

Learn to Program Ruby FREE

I'm starting a new Ruby programming series on YouTube. The first video went online today. More to follow soon. Be sure to subscribe to my YouTube channel to get notification of new lessons.

Monday, 11 November 2019

Arrays, Addresses and Pointers in C Programming

What's the difference between this:

char s[] = "Hello";

and this...?

char *s = "Hello";

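Aren't they just two ways of doing the same thing? That is, of assigning the string "Hello" to the string variable s? Well, no. In fact, these two declarations do very different things: the first creates an array of six characters (five letters plus the terminating null character) holding its own copy of "Hello", while the second creates a pointer variable that stores the address of a string literal held elsewhere in memory. If you have moved to C from another language such as Java, Python, C# or Ruby, making sense of arrays, strings, pointers and addresses is likely to be one of the biggest challenges you'll face. Here's a video I made that should help out.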

For a more in-depth guide to C programming, see my book, The Little Book Of C:

Amazon (US): https://amzn.to/2RXwA6a

Amazon (UK): https://amzn.to/2JhlwOA

For a full explanation of pointers, see The Little Book Of Pointers:

Amazon (US): https://amzn.to/2LF2aVb

Amazon (UK): https://amzn.to/2FViSvS


Friday, 8 November 2019

Use the Delphi TreeView to Program a Collapsible Outliner

Here is the latest lesson on creating an outlining tool with Object Pascal and Delphi:

Be sure to subscribe to my YouTube channel in order to be notified whenever a new lesson is uploaded: https://www.youtube.com/BitwiseCourses?sub_confirmation=1

Wednesday, 16 October 2019

Learn To Program Delphi and Object Pascal

I'm starting a new YouTube series on Delphi programming. In the course of the series I will explain how to design and code a collapsible outliner utility (for brainstorming, TODO lists, password management, project planning and so on).

Eventually my outliner will let you create trees of headings and subheadings, drag and drop branches to reposition them and even attach formatted notes to each branch. If you’ve never programmed in Delphi before, this will be a great way to discover this wonderful development tool for Object Pascal programming. If you already program in Delphi, it will give you an insight into using the TreeView component and saving and loading complex data to and from disk. And, best of all, you’ll end up creating a genuinely valuable tool.


In this first lesson, I give an overview of Delphi and tell you how you can download a free copy.

Be sure to subscribe to my YouTube channel in order to be notified whenever a new lesson is uploaded: https://www.youtube.com/BitwiseCourses?sub_confirmation=1

Monday, 7 October 2019

What are C# Generic Collections?

Baffled by generic lists and dictionaries in C# (C-Sharp)? I hope this short video helps.

Thursday, 3 October 2019

The Little Book Of C# Programming

I was very pleased to see that my book on C# programming is listed Number 1 in several categories on Amazon today. It won't last, I'm sure, but it's very gratifying all the same...

The Little Book Of C# is available as a paperback or Kindle eBook from Amazon.com, Amazon.co.uk and other international Amazon stores.


Tuesday, 24 September 2019

Cyberlink PowerDirector 18 Review

PowerDirector 18 Ultimate £99.99 / $129.99

Subscription plans also available starting at £5/month

https://www.cyberlink.com/products/powerdirector-video-editing-software-ultimate/

If you are looking for video editing software capable of professional results but without a professional price tag, PowerDirector is hard to beat. It is packed with powerful recording and editing features and outputs or ‘produces’ videos blazingly fast. When I reviewed PowerDirector 17, last year, I wrote “While it is priced towards the hobbyist end of the market, don’t be fooled into thinking it is for amateurs only. In fact, it now has an excellent range of pro-level features. For the serious video editor on a tight budget, PowerDirector is my top recommendation.”

This month sees the release of PowerDirector 18, which adds a range of new features (see What’s New In PowerDirector 18). It has ‘audio scrubbing’, which means it plays back audio as you move the playhead over a track so that you can more easily find a specific location (for example, where the subject says a certain word). It makes it easy to create perfectly square videos, suitable for some social media sites. There is also additional file format support for pro cameras, an improved title designer to create and animate titles (see example) and the ability to undock the media library and timeline (handy if you are using more than one monitor), plus a variety of interface and usability improvements such as hotkey customisation, snap-alignment of objects and a shape designer for adding vector shapes to videos. These are all, in my opinion, fairly small changes (there are no huge new ‘gee-whiz’ features) but cumulatively they make worthwhile improvements to PowerDirector.

The new features are just the icing on the cake, however. PowerDirector is already packed with a range of excellent features from its previous editions (see my reviews of PowerDirector 17 and PowerDirector 16) including camera and screencast capture, powerful multi-track editing, a huge range of effects, transitions and colour correction tools, split-screen videos, particle effects, audio editing and synchronization and much more.

It has a few irritating features too. For example, its video editing tools are spread about in a variety of different places. To change the colours and lens effects, you can either load up some pages containing scrollbars from the Fix/Enhance menu or apply presets from the Effects library. To crop and zoom, you can either select a popup Crop/Zoom window from the PowerTools menu or load up the PiP (Picture In Picture) designer and do it there. The PiP designer is also where you apply Chroma Key (to remove green-screen backgrounds) and make other adjustments to video clip animations. To be honest, there are so many menus, dialog boxes, drag-and-drop effects and popup video editing panels that I often forget which one I need to use in order to make the edits I want.

Another peculiarly annoying idiosyncrasy is that when you unlink the audio from a video track then move the audio relative to the track (say to synchronize sound and video) and then relink or group the two tracks, you can no longer load the video into the PiP editor. In order to do that, you have to unlink the tracks all over again, do the desired edits, then relink them. Similarly, if you group audio and video and then split the clip, you can’t delete one side of the split without first ungrouping the clips, then doing the deletion, then regrouping them.

Whether you are making pro-grade 4K videos, online educational videos or just simple YouTube videos, PowerDirector 18 is a great program at a very reasonable price.


[Image: PowerDirector 18 is a fully-featured video editing suite]

[Image: If you have two monitors, you can dock the media panel and timeline on one monitor (here they are on my left screen) and show the video preview on the other monitor (here on the right)]

[Image: You can create your own vector shapes and callouts, change the colours and even add animation. These are then saved to the library for easy use in your projects]


Monday, 23 September 2019

Saving local variable values from Recursive Function-calls

The values of local variables inside functions that are called recursively are lost when the recursion unwinds, because each call's stack frame is discarded when that call returns. How can you save those values? This video explains.

I have written a book about recursion, “The Little Book Of Recursion”. You can find more about that here: http://bitwisebooks.com/books/little-book-of-recursion/

You can buy The Little Book Of Recursion from Amazon:

Amazon (US) https://amzn.to/2JjrJtq

Amazon (UK) https://amzn.to/2YCYx5N

Or search for its ISBN: 978-1913132057

Friday, 20 September 2019

What are Stack Frames and The Call Stack?

My latest YouTube video gives a very quick overview of what stack frames are and why programmers need to understand them...

Thursday, 19 September 2019

Camtasia 2019 Review

Camtasia 2019 £239

https://www.techsmith.com

The venerable screencasting software continues to be improved. But does it still lead the field…?

Camtasia is probably the best known screencasting software for Windows and it is also one of the top screencasting products for the Mac. If you are making educational courses or YouTube videos that need recordings from your computer screen, or if you are creating software demos, Camtasia is a great tool. It lets you capture animated videos of all the action on your computer screen. Alternatively, you can capture from a selected window or from a rectangular area marked off with your mouse.

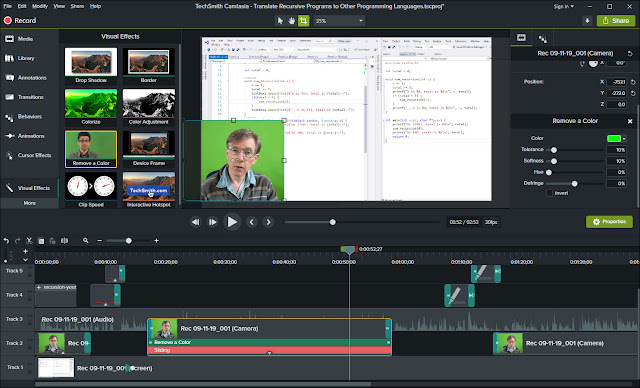

[Image: The Camtasia 2019 editing environment shows a video preview above a multi-track timeline with panels of media clips, effects, transitions and more at the top-left.]

The Camtasia editing environment takes the form of a multi-track timeline beneath a video editing preview window and some panels from which clips, effects, transitions and other elements can be selected. On the timeline you can place numerous video and audio clips as well as still images. A fade-transition from one clip to another can be done just by overlapping the adjoining clip edges. Or, if you want fancier transitions, you can select page-rolls, Venetian-blind effects, random dissolves, glows and so on just by dragging those effects from a ‘Transitions’ panel.

There is also a Library. This was one of the big improvements made to the last version (Camtasia 2018). The Library is a panel where you can store video and audio clips, images and ‘callouts’ (bits of text or animated intros and credits). You can have several named libraries, which can be selected from a drop-down list.

What’s New?

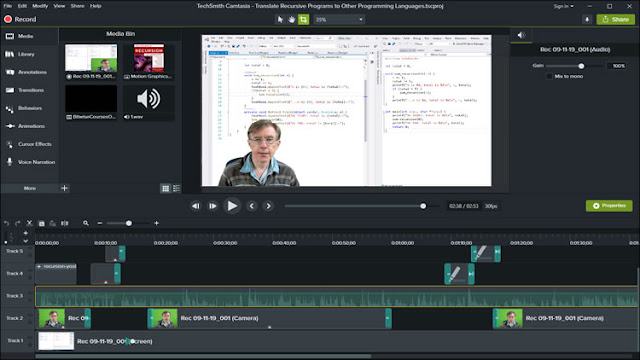

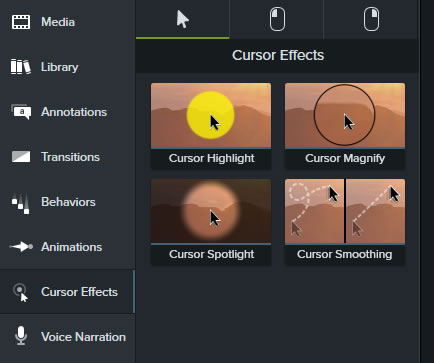

So what’s new in this version? The truth is, very little. According to TechSmith, the principal new features are Automatic Audio Levelling, Cursor Smoothing, the ability to customise shortcuts, download device frames and improved downloading of assets. The last three of these features are so trivial that I’ll describe them in a single paragraph. Here goes…

[Image: You can customise the hotkeys throughout the application using the shortcuts dialog.]

Exploring a little further (using the comparison chart https://www.techsmith.com/camtasia-compare-versions.html), I see that there are a few more small changes such as some extra text properties and PDF import. These are so slight that TechSmith doesn’t even bother to mention them in its ‘What’s New?’ page: https://support.techsmith.com/hc/en-us/articles/360023972011-What-s-New-in-Camtasia-2019

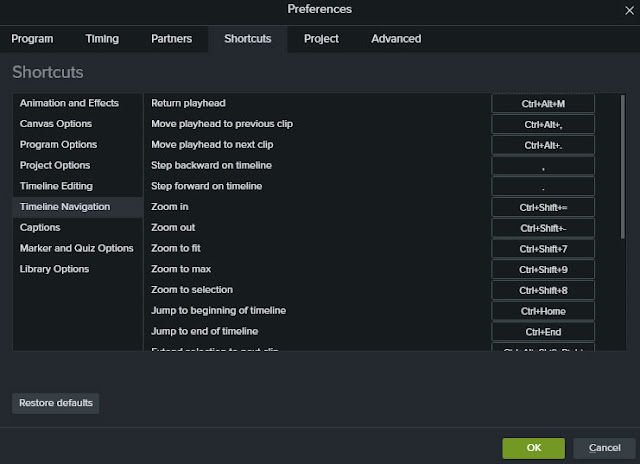

The only two remaining new features worth highlighting are automatic audio levelling and cursor smoothing. The audio-levelling capability is genuinely useful. It lets you add multiple audio or video recordings and, if the volume levels vary, it instantly regularises them. Of course, you could do this yourself (it would only take a few seconds per clip) but having it done for you is easier and more reliable. As long as the ‘auto normalize loudness’ option is selected for your project, you can just drop on clips and the software will automatically ‘correct’ low-volume clips so that each clip sounds equally loud.

The auto-levelling feature can, in fact, be a bit unpredictable in some circumstances. I’ve found that when I create a project with the feature enabled, it stays enabled even when I subsequently disable the option. That means that the audio of any clips I add continues to auto-adjust even though I don’t want it to. This behaviour does not affect new projects that have the option disabled from the outset.

[Image: If your screen recordings suffer from cursor-waggle, you can now smooth out the movements with a new cursor effect]

Is it worth it?

Camtasia is updated fairly frequently and this latest version strikes me as a relatively minor update. In my opinion, the last really big new version of Camtasia, one which substantially improved the software with numerous new features and a significant redesign, was Camtasia 8, way back in 2012 (read my review http://bitwisemag.co.uk/2/Camtasia-Studio-8.html). Camtasia 9 in 2016 (see my review http://www.bitwisemag.com/2016/10/camtasia-9-for-windows-3-for-mac-review.html) defined the look-and-feel that the software has kept ever since. Camtasia 2018 was an incremental update with numerous useful improvements but no huge new features (http://www.bitwisemag.com/2018/07/camtasia-2018-review.html). So is this new version good enough to make Camtasia my first choice for screencasting?

The short answer is yes, but with caveats. I would generally recommend Camtasia to anyone who regularly makes screencasts on Windows. The Mac edition is also pretty good but faces strong competition from ScreenFlow. In spite of relatively few additions to Camtasia 2019, this software remains my favourite Windows screen recorder for the simple reason that it is so quick and easy to use.

If you are on a tight budget, however, or if you want a more ‘general purpose’ video editor, you might think about opting for a program such as Cyberlink PowerDirector or Movavi Video Suite, both of which include screen capture tools.

If you are an existing Camtasia user, you probably want to know whether this new edition justifies the cost of upgrading. I would have to say probably not. The upgrade from Camtasia 2018 to 2019 costs £95.64. Frankly, you won’t get much for your money. The improvements in Camtasia 2019 are quite minor. So, in summary: Camtasia 2019 is a great screen recorder and editor. But the new features in this edition are underwhelming.

Monday, 16 September 2019

Learn to Program C# (C-Sharp) with a FREE 4 Hour Online Course

I have just released a completely free online course on the C# language. This course used to sell for $99 but from today you can sign up for free. It contains 33 video lessons (over four hours of instruction) plus an eBook and all the source code of the sample projects. Sign up here: https://bitwisecourses.com/p/learn-to-program-csharp

If you want to get started with C# programming on Windows (using a free copy of Visual Studio), this is the course you need!

Incidentally, if you prefer to learn from a book or you need a book to take you deeper into the subjects covered in my course, my book, The Little Book Of C# is available in paperback or for Kindle on Amazon (US) https://amzn.to/2JWDI0o, Amazon (UK) https://amzn.to/2YaCPtS and worldwide.


Friday, 16 August 2019

The Little Book Of Ruby Programming

My new book on Ruby programming is published today, available from Amazon (US), Amazon (UK) and worldwide (ISBN: 978-1913132071).

I originally wrote The Little Book Of Ruby way back in 2006. My software development company was creating some Ruby development tools at the time and we really needed a quick way to learn the basic features of the Ruby programming language. Hence The Little Book. This latest edition has been considerably updated, expanded and reformatted and there is also a downloadable source code archive of all the programs in the book. It is the first time I’ve released The Little Book Of Ruby in paperback and Kindle eBook format. If you are new to Ruby and want a really quick way to start programming, I hope this may be of use.


Wednesday, 14 August 2019

Smalltalk – The Most Important Language You’ve Never Used

In my last two posts I mentioned two programming languages with big ideas (Modula-2 and Prolog) which, however, never ranked among the dominant mainstream languages. Now I want to look at one of the most influential languages ever created – Smalltalk.

It would be hard to overstate the influence of Smalltalk. Without Smalltalk there would have been no Mac and no Windows. In all probability you would not now have a mouse attached to your computer; networking might have come around eventually, but not as quickly as it did; and object orientation might never have made it to the mainstream.

[Image: This is Smalltalk/V - a Windows based Smalltalk from the 1980s.]

Most of the current generation of OOP languages take some bits of Smalltalk (such as classes and methods), miss out other bits (such as data-hiding, a class-browsing IDE, image-saving, the ‘message passing’ paradigm etc.) and add on a few things of their own, such as C++’s multiple inheritance. The end result is that those languages are less simple, consistent and coherent than Smalltalk. One of the proud boasts of the developers of Pharo (one of the best modern Smalltalk implementations) is that the entire language syntax can be written on a postcard. Try doing that with C++!

[Image: Dolphin Smalltalk - a modern Windows-based implementation]

When I read programming forums on the Internet these days, the main feature which is praised by enthusiastic programmers is the speed with which they can write programs. In fact, the more programming I do (I’ve been at it since the early ’80s), the more I become convinced that the most important thing is not the speed with which I can write programs but the speed with which I can debug them. The simpler the code, the easier it is to debug.

Debugging is the partner to maintaining. A great many programmers now think it’s neat to contribute a ‘hack’ to some programming project then move on to something else – leaving some other sucker to try to maintain and fix the increasingly incomprehensible code at some future date. For many programmers, debugging and maintaining are not even activities which register in their minds. Quick and clever coding is all they care about. Long-term reliability isn’t. Well, frankly, you wouldn’t want the control systems of a nuclear power station written by quick-and-clever programmers!

Simplicity and maintainability were ideals that shaped Smalltalk and Modula-2. That’s why Smalltalk worked with inheritance (re-using existing features) and encapsulation. It’s why Modula-2 implemented data-hiding inside hermetically sealed modules.

But maybe the three languages that I’ve highlighted (Smalltalk, Modula-2 and Prolog) were simply too different from the languages that eventually came to dominate the world of programming. Smalltalk was perhaps too insular (there was no separation between the programming language and its environment) and its insistence on simplicity made it hard to change the language to add on significant new features. Prolog programs were too uncontrollable, with their wide-ranging searches for solutions to complex problems. Modula-2 was too restrictively ‘modular’ with its authoritarian insistence on the precise separation of one unit of code from another.

As a consequence, we now have more mundane languages such as Ruby, Python, Java, C++ and C#, which all mix-and-match ideas from earlier languages but seem to lack any single ‘great idea’ of their own. Perhaps that is what the world really wants – workaday languages that may not be perfect but at least get the job done.

Even so, I refuse to believe that they represent the face of the programming future. One day, surely, someone will have a brilliant idea (and no, I can’t even guess what that might be!) that will dramatically change the way we program. Until then, I can only wonder how different our experience of programming might have been if only Prolog, Smalltalk and Modula-2 had become the big trinity of languages instead of C, C++ and Java.

Monday, 12 August 2019

Prolog – The Logical Choice for Programming

In my last blog post I mentioned a few old programming languages that had big ideas. One of the most ambitious of these was Prolog. Since very few programmers these days have any experience of Prolog, let me explain why it was such a remarkable language.

Prolog was designed to do ‘logic programming’. When I first used Prolog, back in the 1980s, I was initially overwhelmed by its expressive power. When using other (‘procedural’) programming languages, you had to find solutions to all your programming problems at the development stage and then hard-code those solutions into your program. With Prolog, on the other hand, you could ask your program questions at runtime and let it look for one or more possible solutions. Heady stuff. This (I thought) must surely be the way that all programs will be written one day.

Prolog programs are constructed from a series of facts and rules. For example, you could assert that Smalltalk and Ruby are OOP languages by declaring the following ‘facts’:

oop(smalltalk).
oop(ruby).

To find a list of all known OOP languages you would just enter this query:

oop(L).

In Prolog, when an identifier begins with a capital letter, this indicates an ‘unbound’ variable which Prolog will try to match with known data. In this case, Prolog replies:

L = smalltalk
L = ruby

Now you can go on to define some rules. For example, let’s say that you want to write the rule that Reba only likes languages that are OOP as long as Dolly does not like those languages. This is the Prolog rule:

likes(reba, Language) :-
    oop(Language),
    not(likes(dolly, Language)).

Let’s assume that the program also contains this fact:

likes(dolly, ruby).

You can now enter this query:

likes(reba, L).

The only language returned will be:

L = smalltalk

This is just the tip of the iceberg with Prolog. The language gets really interesting once you start defining enormously complex sets of rules, each of which depends on other rules. The essential idea is that each rule should define some logical proposition. When you write a rule, you can concentrate on a tiny fragment of what might eventually become an amazingly complex set of interdependent propositions. The programmer (in principle) doesn’t have to worry about the ultimate complexity. Instead, as long as each tiny individual rule works, you can rely on the fact that the vast logical network of which they will ultimately form a part will also work. Making sense of that complexity is Prolog’s problem, not the programmer’s.

In principle, Prolog seemed to offer the potential to do ‘real’ AI programming of great sophistication. Some people even believed that Prolog would provide the natural path to creating a truly ‘thinking machine’.

Well, years went by and Prolog failed to live up to its promise. Part of the problem was that, while it was great at finding numerous solutions to a problem, it wasn’t so good at finding just one. A nasty little thing called the ‘cut’ (the ! character) was used to stop Prolog searching once a solution had been found. But the cut, in effect, breaks the logic and scars the beauty (and simplicity) of Prolog. Another problem was that Prolog interpreters were fairly slow. Prolog compilers were created to get around this limitation and Visual Prolog even introduced strict typing (which is not a part of standard Prolog). But in order to gain efficiency, the compiler sacrificed the metaprogramming (self-modifying) capabilities which many people consider fundamental to the language.

In brief, it’s probably fair to say that Prolog’s greatest enthusiasts were simply unrealistic about what the language might achieve. For the time being, Prolog seems to be an interesting diversion in programming history which has not (so far) delivered upon its early promise.

Still, it was, and is, an amazingly ambitious language that did lots of really interesting things. Unlike most of today’s ‘new and better’ programming languages, it didn’t just recycle old ideas in slightly new ways. However, there was another programming language that was just as ambitious as Prolog but ultimately far more influential: Smalltalk. That will be the subject of my next post.

[Image: You can try out Prolog online using Swish]

oop(smalltalk).

oop(ruby).

To find a list of all known OOP languages you would just enter this query:

oop(L).

In Prolog, when an identifier begins with capital letter, this indicates an ‘unbound’ variable which Prolog will try to match with known data. In this case, Prolog replies:

L = smalltalk

L = ruby

Now you can go on to define some rules. For example, let’s says that you want to write the rule that Reba only likes Languages that are OOP as long as Dolly does not like those languages. This is the Prolog rule:

likes(reba,Language) :-

oop(Language),

not(likes(dolly,Language)).

Let’s assume that the program also contains this fact:

likes(dolly, ruby).

You can now enter this query:

likes(reba, L).

The only language returned will be:

L = smalltalk

This is just the tip of the iceberg with Prolog. The language gets really interesting once you start defining enormously complex sets of rules, each of which depends on other rules. The essential idea is that each rule should define some logical proposition. When you write a rule, you can concentrate on a tiny fragment of what might eventually become an amazingly complex set of interdependent propositions. The programmer (in principle) doesn’t have to worry about the ultimate complexity. Instead, as long as each tiny individual rule works, you can rely on the fact that the vast logical network of which they will ultimately form a part will also work. Making sense of that complexity is Prolog’s problem, not the programmer’s.

In principle, Prolog seemed to offer the potential to do ‘real’ AI programming of great sophistication. Some people even believed that Prolog would provide the natural path to creating a truly ‘thinking machine’.


Visual Prolog has a good editor, a built-in debugger, design tools and a compiler, but purists would say that it isn't 'real' Prolog.


Try out Prolog

There are several implementations of Prolog available, both free and commercial:

- SWI-Prolog is one of the most complete free editions: https://www.swi-prolog.org/

- To use SWI-Prolog with an editor/IDE, you should also install the SWI-Prolog Editor: http://arbeitsplattform.bildung.hessen.de/fach/informatik/swiprolog/indexe.html

- Alternatively, you can write and run short SWI-Prolog programs online here: https://swish.swi-prolog.org/

- Visual Prolog has (by far!) the best IDE, complete with editor, debugger, visual designer and compiler. It is available in commercial and free editions. However, it is a non-standard version of Prolog: https://www.visual-prolog.com

Sunday, 11 August 2019

Why Are There No Big New Ideas in Programming?

Modern mainstream programming languages are all much of a muchness these days. Take some object orientation, add in some ‘dynamic typing’, maybe add on a fast compiler to give you the programming benefits of a ‘scripting language’ with the efficiency benefits of C… I keep reading the same sorts of claims made for all kinds of ‘new’ languages. Far from seeming at all new, they strike me as lots of old stuff mixed up together in different ways.

Where are all the truly new ideas now?

In all the languages I’ve used in the last forty years or so, only three have struck me as having a ‘big vision’: languages that have a profound belief in the value of their design, a belief that shapes the language itself from start to finish. None of those languages, however, is now widely used. They are: Modula-2, Prolog and Smalltalk.

The be-all and end-all of Modula-2 was its modularity. You put code inside well-defined units called ‘modules’ and once in there, that code cannot be accessed from outside the module unless it is very precisely imported and exported. If you think this sounds like the modules, units and mixins of most other languages, think again. Java, C#, Ruby, Object Pascal and many other languages are far less strict in their modularity. In fact, I have found that most programmers have so little experience of modular programming that they often have great difficulty even trying to understand the concept and the benefits of strict modularity. That’s one reason why, even though Modula-2 itself may have failed to take the world by storm, I think it would be useful to most programmers, whatever languages they usually use, to have at least some experience of programming Modula-2 or its successor, Oberon.

But even more ‘visionary’ than Modula-2 are Prolog and Smalltalk. If you have never programmed in Prolog (and I suppose it must be highly likely that you haven’t) I’ll explain why it was such an exciting and ambitious language in my next post.


Wednesday, 7 August 2019

Writing a Retro Text Adventure in Delphi

It’s no secret that I am a keen adventure gamer. I love playing them. I love programming them. The traditional type of text adventure (sometimes called ‘Interactive Fiction’) is a bit like a book in which the game-player is a character. You walk around the world entering human language commands to “Look at” objects, “Take” and “Drop” them, move around the world to North, South, East and West (and maybe Up and Down too). Unlike modern graphics games, an adventure game can realistically be coded from start to finish by a single programmer with no need to use complicated ‘game frameworks’ to help out.

While my Delphi adventure game has a graphical user interface, it is, in essence, a text adventure just like the 70s and 80s originals

Programming a game is not a trivial task. If you are using an object oriented language, you need to create class hierarchies in order to create treasures, rooms, a map and a moveable player. You need good list-management to let the player take objects from rooms and add them to the player’s inventory. And you need robust file I/O routines to let you save and reload the entire ‘game state’.

If you really want to understand all the techniques of adventure game programming, I have an in-depth course that will guide you through every step of the way using the C# language in Visual Studio.

But you don’t have to use C#. I’ve written similar games in a variety of languages: Ruby, Java, ActionScript, Prolog and Smalltalk to name just a few. Recently one of the students of my C# game programming course asked for some help with writing a game in Object Pascal (the native language of Delphi, and also of Lazarus). OK, so here goes…

Delphi lets you design, compile and debug Object Pascal applications

This is not a tutorial on how to use Delphi. I am therefore assuming that you already know how to create a new VCL application, design a user interface and do basic coding. If you don’t, there are some tutorials here: http://www.delphibasics.co.uk/

If you prefer an online video-based tutorial with all the source code, you can get a special deal on my Delphi & Object Pascal Course by clicking this link.

The Adventure Begins

First I designed a simple user interface with buttons and a text field to let me enter the name of an object that I want to take or drop. The main game output is displayed in a TRichEdit box which I’ve named DisplayBox.

Now, I want to create the basic objects for my game. I want a base Thing class which has a name and a description. All other classes in my game derive from Thing. Then I want a Room class to define a location and an Actor class for interactive characters, notably the player. I could put all these classes into a single code file, but I prefer to put each one into its own code file, so I’ve created three files: ThingUnit.pas, RoomUnit.pas and ActorUnit.pas.

Here are the contents of those files:

unit ThingUnit;

interface

type

Thing = class(TObject)

private // hide data

_name: shortstring;

_description: shortstring;

public

constructor Create(aName, aDescription: shortstring);

destructor Destroy; override;

property Name: shortstring read _name write _name;

property Description: shortstring read _description write _description;

end;

implementation

constructor Thing.Create(aName, aDescription: shortstring);

begin

inherited Create;

_name := aName;

_description := aDescription;

end;

destructor Thing.Destroy;

begin

inherited Destroy;

end;

end.

unit ActorUnit;

interface

uses ThingUnit, RoomUnit;

type

Actor = class(Thing)

private

_location: Room;

public

constructor Create(aName, aDescription: shortstring; aLocation: Room);

destructor Destroy; override;

property Location: Room read _location write _location;

end;

implementation

constructor Actor.Create(aName, aDescription: shortstring; aLocation: Room);

begin

inherited Create(aName, aDescription);

_location := aLocation;

end;

destructor Actor.Destroy;

begin

inherited Destroy;

end;

end.

unit RoomUnit;

interface

uses ThingUnit;

type

Room = class(Thing)

private

_n, _s, _w, _e: integer;

public

constructor Create(aName, aDescription: shortstring;

aNorth, aSouth, aWest, anEast: integer);

destructor Destroy; override;

property N: integer read _n write _n;

property S: integer read _s write _s;

property W: integer read _w write _w;

property E: integer read _e write _e;

end;

implementation

{ === ROOM === }

constructor Room.Create(aName, aDescription: shortstring;

aNorth, aSouth, aWest, anEast: integer);

begin

inherited Create(aName, aDescription);

_n := aNorth;

_s := aSouth;

_w := aWest;

_e := anEast;

end;

destructor Room.Destroy;

begin

inherited Destroy;

end;

end.

And this is the code in the main file, gameform.pas. Note that the button event-handlers such as TMainForm.NorthBtnClick were created using the Events tab of Delphi’s Object Inspector before I added code to those methods:

unit gameform;

interface

uses

Winapi.Windows, Winapi.Messages, System.SysUtils, System.Variants,

System.Classes, Vcl.Graphics,

Vcl.Controls, Vcl.Forms, Vcl.Dialogs, Vcl.StdCtrls, Vcl.ExtCtrls,

Vcl.ComCtrls,

ThingUnit, RoomUnit, ActorUnit;

type

TMainForm = class(TForm)

DisplayBox: TRichEdit;

NorthBtn: TButton;

SouthBtn: TButton;

WestBtn: TButton;

EastBtn: TButton;

LookBtn: TButton;

CheckObBtn: TButton;

TestSaveBtn: TButton;

TestLoadBtn: TButton;

Panel1: TPanel;

DropBtn: TButton;

TakeBtn: TButton;

inputEdit: TEdit;

InvBtn: TButton;

procedure FormCreate(Sender: TObject);

procedure LookBtnClick(Sender: TObject);

procedure SouthBtnClick(Sender: TObject);

procedure NorthBtnClick(Sender: TObject);

procedure WestBtnClick(Sender: TObject);

procedure EastBtnClick(Sender: TObject);

private

{ Private declarations }

procedure CreateMap;

public

procedure Display(msg: string);

procedure MovePlayer(newpos: Integer);

{ Public declarations }

end;

var

MainForm: TMainForm;

room1, room2, room3, room4: Room;

map: array [0 .. 3] of Room;

player: Actor;

implementation

{$R *.dfm}

procedure TMainForm.Display(msg: string);

begin

DisplayBox.lines.add(msg);

end;

procedure TMainForm.CreateMap;

begin

room1 := Room.Create('Troll Room', 'a dank, dark room that smells of troll',

-1, 2, -1, 1);

room2 := Room.Create('Forest',

'a light, airy forest shimmering with sunlight', -1, -1, 0, -1);

room3 := Room.Create('Cave',

'a vast cave with walls covered by luminous moss', 0, -1, -1, 3);

room4 := Room.Create('Dungeon',

'a gloomy dungeon. Rats scurry across its floor', -1, -1, 2, -1);

map[0] := room1;

map[1] := room2;

map[2] := room3;

map[3] := room4;

player := Actor.Create('You', 'The Player', room1);

end;

procedure TMainForm.FormCreate(Sender: TObject);

begin

CreateMap;

end;

procedure TMainForm.LookBtnClick(Sender: TObject);

begin

Display('You are in ' + player.Location.Name);

Display('It is ' + player.Location.Description);

end;

procedure TMainForm.MovePlayer(newpos: Integer);

begin

if (newpos = -1) then

Display('There is no exit in that direction')

else

begin

player.Location := map[newpos];

Display('You are now in the ' + player.Location.Name);

end;

end;

procedure TMainForm.NorthBtnClick(Sender: TObject);

begin

MovePlayer(player.Location.N);

end;

procedure TMainForm.SouthBtnClick(Sender: TObject);

begin

MovePlayer(player.Location.S);

end;

procedure TMainForm.WestBtnClick(Sender: TObject);

begin

MovePlayer(player.Location.W);

end;

procedure TMainForm.EastBtnClick(Sender: TObject);

begin

MovePlayer(player.Location.E);

end;

end.

So this is already a good basis for the development of an adventure game in Delphi. I have a map (an array) of rooms and a player (an Actor object) capable of moving around. There’s still much to do, however: for example, I need some way of taking and dropping objects and of saving and restoring a game. I’ll look at those problems in a future article.

If you are seriously interested in programming text adventure games or learning Delphi, here are special deals on two of my programming courses.

Learn To Program an Adventure Game In C#

- Write a retro-style adventure game like ‘Zork’ or ‘Colossal Cave’

- Master object orientation by creating hierarchies of treasure objects

- Create rooms and maps using .NET collections, arrays and Dictionaries

- Create objects with overloaded and overridden methods

- Serialize networks of data to save and restore games

- Write modular code using classes, partial classes and subclasses

- Program user interaction with a ‘natural language’ interface

- Plus: encapsulation, inheritance, constructors, enums, properties, hidden methods and much more…

https://bitwisecourses.com/p/adventures-in-c-sharp-programming/?product_id=943143&coupon_code=BWADVDEAL

Learn To Program Delphi

- 40+ lectures, over 6 hours of video instruction teaching Object Oriented programming with Pascal

- Downloadable source code

- A 124-page eBook, The Little Book Of Pascal, explains all the topics in depth

Saturday, 3 August 2019

The Terrible Visual Studio 2019 New Project Dialog

Gahhhh! Why do Microsoft make changes that nobody asks for and nobody wants? A couple of years ago they suddenly put all the Visual Studio menus in capital letters (they undid that change after mass protests). Now in Visual Studio 2019, they've replaced a perfectly neat and functional New Project dialog with a sprawling, disorderly mess. I hope, oh how I hope, that this dialog will soon go the way of the capital-letter menus. In the meantime, if you can't work your way around it, I've just written this short guide on the Bitwise Books web site: http://bitwisebooks.com/visual-studio-2019-using-its-strange-new-project-dialog/

Monday, 29 July 2019

Value, Reference and Out Parameters in C# Programming

Confused by all the parameter types in C#? Here’s a quick guide to help you sort out the differences between value, reference and out parameters. This is an extract from my book, The Little Book Of C#.


By default, when you pass variables to functions or methods these are passed as ‘copies’. That is, their values are passed as arguments and these values are assigned to the corresponding parameters declared by the function. Any changes made within the method will affect only the copies (the parameters) within the scope of the method. The original variables that were passed as arguments (and which were declared outside the method) retain their original values.

Sometimes, however, you may in fact want any changes that are made to parameters inside a method to change the matching variables (the arguments) in the code that called the method. In order to do that, you can pass variables ‘by reference’. When variables are passed by reference, the original variables (or, to be more accurate, the references to the location of those variables in your computer’s memory) are passed to the function. So any changes made to the parameter values inside the function will also change the variables that were passed as arguments when the function was called.

To pass arguments by reference, both the parameters defined by the function and the arguments passed to the function must be preceded by the keyword ref. The following examples should clarify the difference between ‘by value’ and ‘by reference’ arguments. In each case, I assume that two int variables have been declared and initialized like this:

int firstnumber;

int secondnumber;

firstnumber = 10;

secondnumber = 20;

Example 1: By Value Parameters

private void ByValue(int num1, int num2) {

// num1 and num2 are copies, so these assignments do not affect the caller's variables

num1 = 0;

num2 = 1;

}

This method might be called like this:

ByValue(firstnumber, secondnumber);

Remember that firstnumber had the initial value of 10, and secondnumber had the initial value of 20. Only the copies (the values of the parameters, num1 and num2) were changed in the ByValue() method. So, after I call that method, the values of the two variables that I passed as arguments are unchanged:

firstnumber now has the value 10.

secondnumber now has the value 20.

Example 2: By Reference Parameters

private void ByReference(ref int num1, ref int num2) {

// num1 and num2 refer to the caller's variables, so these assignments change them

num1 = 0;

num2 = 1;

}

This method might be called like this:

ByReference(ref firstnumber, ref secondnumber);

Once again, firstnumber has the initial value of 10, and secondnumber has the initial value of 20. But these are now ref parameters, so the parameters num1 and num2 ‘refer’ to the original variables. When changes are made to the parameters, the original variables are also changed:

firstnumber now has the value 0.

secondnumber now has the value 1.

You may also use out parameters which must be preceded by the out keyword instead of the ref keyword.

Example 3: out Parameters

private void OutParams(out int num1, out int num2) {

// each out parameter must be assigned a value before the method returns

num1 = 0;

num2 = 1;

}

This method might be called like this:

int firstnumber;

int secondnumber;

OutParams(out firstnumber, out secondnumber);

In this case, as with ref parameters, the values of the variables that were passed as arguments are changed when the values of the parameters are changed:

firstnumber now has the value 0.

secondnumber now has the value 1.

At first sight, out parameters may seem similar to ref parameters. However, you do not have to assign a value to a variable before passing it as an out argument, whereas a variable passed as a ref argument must be assigned a value first.

You can see this in the example shown above. I do not initialize the values of firstnumber and secondnumber before calling the OutParams() method. That would not be permitted if I were using ordinary (by value) or ref (by reference) parameters. On the other hand, a method must assign a value to each of its out parameters before it returns. This is not obligatory with a ref parameter.

If you need a complete guide to C# programming, my book, The Little Book Of C# is available on Amazon (US), Amazon (UK) and worldwide.

Saturday, 27 July 2019

Learn C# (C-Sharp) In A Day

I know, I know. Unless you are a super-fast learner, you really won't be able to learn very much C# in one day. But if you follow the examples in my new book, you will definitely be able to start writing programs in your first day of study. This is the latest in my series of Little Books of programming. My aim is to keep them short, focused and practical. I know you can get a ton of information online so there's no point padding out these books with class library and syntax references. Instead, each book aims to get you writing - and understanding - programs right away...

This book will teach you to program the C# language from the ground up. You will learn about Object Orientation, classes, methods, generic lists and dictionaries, file operations and exception-handling. Whether you are a new programmer or an experienced programmer who wants to learn the C# language quickly and easily, this is the book for you!

This book explains...


- Fundamentals of C#

- Object Orientation

- Static Classes and Methods

- Visual Studio & .NET

- Variables, Types, Constants

- Operators & Tests

- Methods & Arguments

- Constructors

- Access Modifiers

- Arrays & Strings

- Loops & Conditions

- Files & Directories

- structs & enums

- Overloaded and overridden methods

- Exception-handling

- Lists & Generics

- ...and much more

Buy on Amazon.com, Amazon.co.uk and worldwide.

Tuesday, 16 July 2019

Is an Array in C a Pointer?

In some programming languages, arrays are high-level ‘objects’ and the programmer can think of them simply as ordered lists. In C, you have to deal with arrays ‘as they really are’ because C doesn’t try to hide what is going on ‘close to the metal’. One of the common misconceptions (which I’ve read so many times in books and on web sites that I almost started to believe it was true!) is that array ‘variables’ are ‘special types of pointer’. Well, they aren’t. Not only that, array identifiers aren’t even variables.

Let me explain. Let’s assume you’ve declared an array of chars (C’s version of a string) called str1 and a char pointer, str2, that points to a string:

char str1[] = "Hello";

char *str2 = "Goodbye";

When you use an array’s name in an expression, C (with a few exceptions, such as the sizeof operator) treats it as the address of the array’s first element. So str1 gives the address at which the characters of the string "Hello" are stored. But str2 is a pointer whose value is the address of the string "Goodbye".

In fact, str1 isn’t a variable because its value (the address of an array) cannot be changed. The contents of the array – its individual elements – can be changed. The address of the array, however, cannot. That is why I prefer to call str1 an array ‘identifier’, though many people would call it, somewhat inaccurately, an ‘array variable’.

But, wait a moment. If the value of an array identifier such as char str1[] and the value of a pointer variable such as char *str2 are both addresses, aren’t str1 and str2 both pointers?

No, they are not.

It is an essential feature of a variable that its value can be changed. The value of an array identifier cannot be changed. What’s more, a pointer variable occupies one address; its value can be set to point to different addresses. But an array identifier and its address are one and the same thing. How can that be?

You have to understand what happens during compilation. When your program is compiled, the array identifier, str1, is in effect replaced by the address of the array. That address cannot be changed when your program is run. But str2 is a pointer variable with its own address. Its value (the address of an array) can change if new addresses are assigned to the pointer variable.

If you need to know more about the mysteries of pointers, arrays and addresses in C, I have a book that explains everything (with all the source code examples for you to download). It’s called The Little Book Of Pointers and it’s available as a paperback or eBook from Amazon (US), from Amazon (UK) and other Amazon stores worldwide.


Friday, 12 July 2019

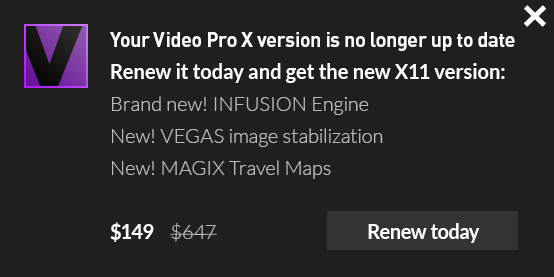

MAGIX PopUp Ads – how to get rid of them

They are like a virus. They infect your computer and make a damn’ nuisance of themselves by popping up adverts, special offers, upgrade deals and, well, more adverts… Upgrade MovieStudio, Buy Sounds for ACID, Download Stuff for VEGAS, Install Junk I really don’t want for MAGIX Music Maker. The damned adverts pop up at the bottom of the screen almost every time I boot up the computer. If there was ever a way to make the customer hate your products, this is it!

Actually, I rather like many MAGIX products. But their persistent, irritating, spammy popup adverts are doing their best to make me change my opinion.

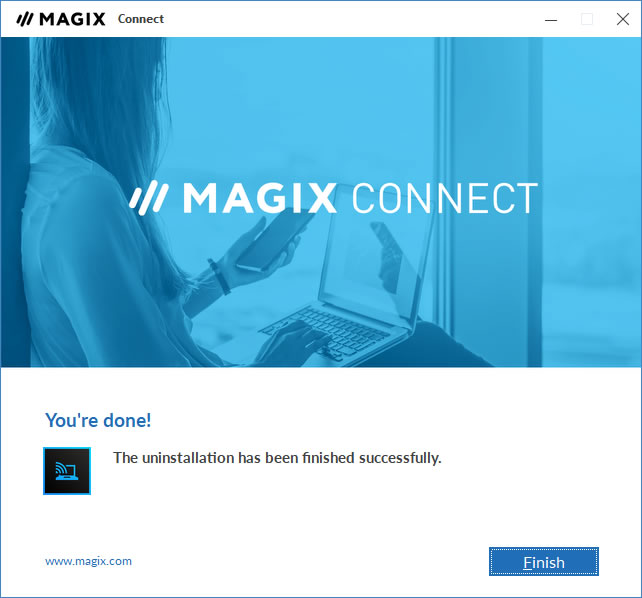

But how do I get rid of them? I couldn’t see an option anywhere to “Disable our Spamware”. I ended up having to Google for help. I eventually found that I have to uninstall a piece of junkware called MAGIX Connect. Go to Settings, Apps, MAGIX Connect, Uninstall.

Hurrah! Now the blasted adverts are gone. What I find truly mysterious about this is that MAGIX can’t see the obvious truth that, far from promoting its software, these nasty, trashy, annoying popups are about the worst sort of bad publicity they could possibly have. As I said, their software is generally good. But as for their Spamware…!!!!

Does anyone really want to see these ads popping up on their PC every day???

Another day, another ad!!!

Oh joy! Gone at last!
